Aydın Tiryaki (April 1, 2026)

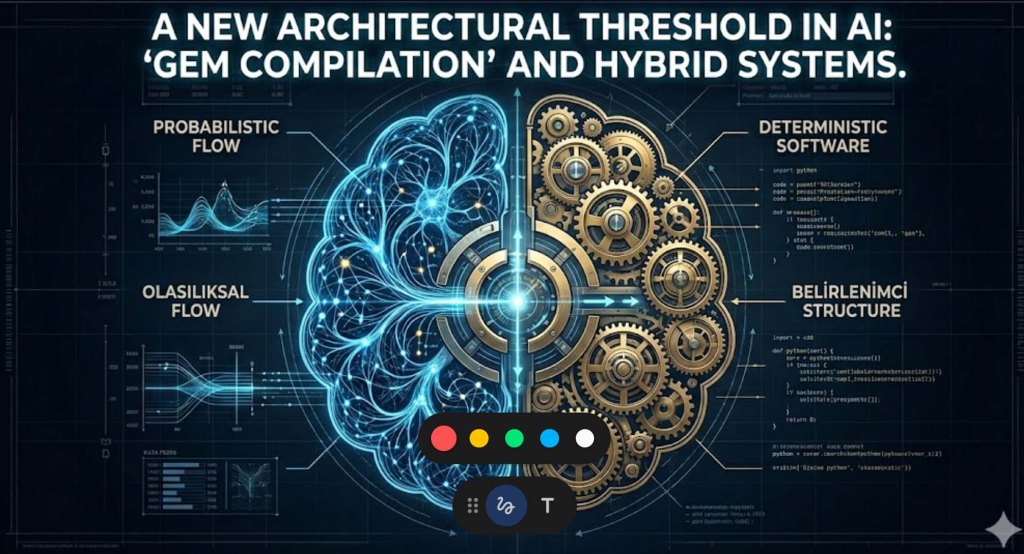

Today, generative AI models (LLMs) demonstrate revolutionary capabilities in understanding language and producing creative content, but from an engineering perspective their greatest weakness is their probabilistic nature. When a “Gem” (Custom AI Assistant) is designed, the model does not always follow instructions with the same fidelity: it sometimes stretches the rules or “prunes” instructions of its own accord. This is the biggest obstacle to building reliable systems at an industrial scale, and it sits at the very center of the familiar conflict between the “free spirit” of AI and the “unshakeable rules” of engineering.

In this article, we will examine two concepts, Hybrid Architecture and “Gem Compilation,” that aim to solve this problem at its root by merging traditional software engineering with artificial intelligence.

1. Algorithm vs. Code: An Engineering Perspective

There is an old truth in the software world: the essence is the algorithm; writing the code is, in a sense, the “drudgery” part of the job. A Gem’s instructions written in natural language are essentially a flexible algorithm. However, this flexibility brings indiscipline with it. If the same algorithm were implemented as classic software, it would always yield the same deterministic result within the framework of its defined rules.

If we can take this flexible algorithm and transform it into software with strictly defined boundaries, we can tighten the limits. At this point, the solution is not to leave the process entirely to the mercy of AI, but to position AI as a “cognitive stop” embedded within a software system. In a way, our main goal is to establish a deterministic structure by reducing intelligence from a free actor to a “consultant expert” level acting within certain rules. This approach is an effort to discipline the probabilistic nature of AI with the deterministic structure of the software world.

2. Hybrid Architecture: The Discipline of Code and the Flexibility of Intelligence

The hybrid structure melts deterministic software code (Python, etc.) and probabilistic AI models in the same pot. In this architecture, which is often grouped under the “AI Agent” label in the industry, the steering wheel is no longer entirely with the AI, but in the hands of a coded algorithm.

The process operates with a “Pipeline” logic as follows:

- Master Program: Python manages the main backbone and workflow of the process. The rules are uncompromising. Because the model never controls the flow, it cannot skip steps, shorten instructions, or stretch rules according to its own whim.

- Sub-routines: Each process step (character counting, format control, data classification) is a separate block of code. These blocks perform the mechanical tasks requiring “drudgery” in milliseconds and, once the code is correct, with the same result every time. They feed their output to the next stage as “clean input.”

- Cognitive Nodes: AI is consulted (via API) only at points requiring interpretation, style adjustment, or creativity. Here, the AI focuses only on the specific question asked of it.

In this structure, AI is removed from the position of a “General Manager” who does everything and is reduced to the position of an “Expert Consultant” who only performs text analysis. The model’s context window does not bloat with unnecessary information, and the risk of hallucination is reduced.
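The pipeline described above can be sketched roughly as follows. This is a minimal illustration, not a reference implementation: every name here (`normalize_whitespace`, `enforce_max_chars`, `stub_model`, `run_pipeline`) is hypothetical, and `stub_model` merely stands in for a real model API call, which the article does not specify.

```python
from typing import Callable

ALLOWED_TONES = {"formal", "informal", "neutral"}


def normalize_whitespace(text: str) -> str:
    """Mechanical sub-routine: collapse every run of whitespace into one space."""
    return " ".join(text.split())


def enforce_max_chars(text: str, limit: int) -> str:
    """Mechanical sub-routine: deterministic length check, no model involved."""
    if len(text) > limit:
        raise ValueError(f"text has {len(text)} characters, limit is {limit}")
    return text


def stub_model(prompt: str) -> str:
    """Placeholder for a real model call (assumption); returns a canned answer."""
    return "formal"


def run_pipeline(
    raw: str,
    ask_model: Callable[[str], str] = stub_model,
    limit: int = 200,
) -> dict:
    """Master program: the code owns the workflow, the model answers one question."""
    clean = enforce_max_chars(normalize_whitespace(raw), limit)

    # Cognitive node: the model sees only the clean text and one narrow question.
    answer = ask_model(f"Classify the tone of this text in one word:\n{clean}")

    # The model's answer is validated by code before it moves to the next stage.
    tone = answer.strip().lower()
    if tone not in ALLOWED_TONES:
        tone = "unknown"

    return {"text": clean, "chars": len(clean), "tone": tone}
```

Passing the model call in as a parameter keeps the master program testable without network access, and the validation step means an off-script model answer is caught by code rather than passed along.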

3. The Concept of Gem Compilation

In traditional software, high-level code is compiled once into machine language that the computer can understand and is then run millions of times with the same behavior. Here lies the heart of our vision: Gem Compilation.

When a Gem is designed, the system analyzes these natural language instructions in the background like a “Compiler”:

- It identifies which parts are mechanical (solvable with code).

- It automatically generates Python functions for these parts.

- It compiles the process once, transforming it into a fixed workflow and API schema.

This compilation is done once, when a new Gem version is released, not on every use. Thus, instead of burdening a massive language model with all the instructions on every use, light and fast code is run. This is the AI equivalent of the philosophy “Compile once, run millions of times,” with predictable behavior and low cost. With the “Function Calling” capability, the model acts as an operator: instead of trying to write code, it triggers precise software from its ready-to-use toolbox.
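As a rough sketch of the “toolbox” idea, the fragment below registers fixed Python functions once and then executes a call request by name. The JSON request shape (`name` plus `arguments`) and all identifiers (`TOOLS`, `tool`, `dispatch`, `count_chars`, `truncate`) are assumptions made for illustration; they do not reproduce any particular vendor’s function-calling API.

```python
import json
from typing import Any, Callable

# Registry filled once, at "compile" time; it stays fixed across every use.
TOOLS: dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a pre-written function under its own name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def count_chars(text: str) -> int:
    """Mechanical task: exact character count."""
    return len(text)


@tool
def truncate(text: str, limit: int) -> str:
    """Mechanical task: cut the text to at most `limit` characters."""
    return text[:limit]


def dispatch(call_json: str) -> Any:
    """Run the tool named in a call request instead of letting the model write code."""
    call = json.loads(call_json)
    name = call["name"]
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**call["arguments"])
```

For example, `dispatch('{"name": "count_chars", "arguments": {"text": "hello"}}')` returns `5`. The model only chooses which tool to trigger and with which arguments; the computation itself is ordinary code.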

4. Resource Savings and “Clean Text” Efficiency

The biggest winners of this architecture are both the user and the producer of the AI itself. AI models are not mathematicians or accountants; they are masters of language. Tiring them with character counting or table formatting is a waste of processing power.

- Low Cognitive Load: Since the AI does not have to “struggle” with technical constraints, it devotes all its energy to content quality. The model can breathe.

- Filtered Input: The data coming through the filter of the software (sub-routines) are the “clean texts” that the model can understand most easily. Efficiency peaks at this point.

- Compute Efficiency: Not occupying massive models for simple tasks provides enormous savings on the server side. The autonomous compilation process is an efficiency shield that prevents the waste of processing power.

5. Static Analysis: Pre-Compilation Refinement

The most critical stage of Gem Compilation is the inspection of instructions by AI in an “Auditor” role before they are cast into code. A “Gem Analyzer” detects contradictions, ambiguities, and logic errors in the instructions at the compilation stage.

A system that warns, “There is a contradiction here, Rule 3 conflicts with Rule 5,” prevents errors from being locked into strict code. This pre-control process (Static Analysis) helps ensure that Gems are refined at the design stage and that risks such as infinite loops are caught before release. The result is not a randomly speaking chatbot, but an “Industrial Grade Gem” with an unshakeable software algorithm behind it.
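The article assigns the auditor role to an AI. The sketch below covers only the small subset of contradictions that can be checked mechanically, under the assumption that instructions have already been reduced to structured rules. The `Rule` structure and the `min_chars` / `max_chars` kinds are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    number: int   # position of the rule in the Gem's instruction list
    target: str   # what the rule constrains, e.g. "summary"
    kind: str     # "min_chars" or "max_chars"
    value: int


def find_conflicts(rules: list[Rule]) -> list[str]:
    """Report pairs of length rules on the same target that cannot both hold."""
    warnings = []
    minimums = [r for r in rules if r.kind == "min_chars"]
    maximums = [r for r in rules if r.kind == "max_chars"]
    for low in minimums:
        for high in maximums:
            if low.target == high.target and low.value > high.value:
                warnings.append(
                    f"Rule {low.number} conflicts with Rule {high.number}: "
                    f"{low.target} needs at least {low.value} characters "
                    f"but at most {high.value}"
                )
    return warnings
```

Given a rule 3 that demands at least 500 characters for a summary and a rule 5 that caps the same summary at 300, `find_conflicts` returns a single warning beginning with “Rule 3 conflicts with Rule 5”. Ambiguities and logic errors expressed in free text would still need the AI auditor described above.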

Conclusion

The future of AI assistants lies not just in producing larger models, but in how we marry these models with disciplined software architectures. “Gem Compilation” and “Hybrid Structure” are the keys to transforming AI from a “chatbot” into a reliable and sustainable professional tool. This vision proposes a new standard for the AI world by combining probabilistic intelligence with a deterministic engineering backbone.

Appendix: Technical Dialogue on Gem Compilation

Gemini and Aydın Tiryaki:

Aydın Tiryaki: The real issue we are focusing on today is the operational stability of Gems (Custom GPT/Gemini). When we design Gems, they don’t exhibit the same discipline every time; they can behave inconsistently in different modes or at different input densities. If this were software, it would always give the same deterministic result within the framework of defined rules. I’m wondering: We prepare the Gem with natural language, but after preparing it, can we say to the AI, “Take the instructions of this Gem and convert them into code with strict rules like Python, applying every step unfailingly”? In other words, can we translate a flexible instruction set into an unshakeable software skeleton?

Gemini: Aydın Hocam, this approach is an effort to discipline the “probabilistic” nature of the AI world with the “deterministic” structure of the software world, and it is definitely possible. We call this the “Hybrid Approach.” No matter how good AI is at understanding language and creativity, it is just as flexible (or in your terms, prone to “pruning”) in following strict rules and mathematical limits. When we leave the mechanical steps to Python code and the cognitive steps to the model itself, this hybrid structure that emerges eliminates “inconsistency,” which is the weakest link of the system.

Aydın Tiryaki: Actually, in a piece of software, the essence is the algorithm; writing code is, in a sense, the “drudgery” part of the work. Gems also have their own algorithms internally (even if written in natural language). However, this algorithm has a very “relaxed” (flexible) structure. If we take this Gem algorithm and turn it into software with strictly drawn frames, we can tighten the boundaries. We can even turn the entire process into software and use AI just as a consultant at certain “intelligence stops” (between sub-programs) within that software.

Gemini: Your observation is very strategic. This is exactly the basis of what we call “AI Agent” in the industry. Instead of leaving the process entirely to the mercy of the AI, a strict algorithm coded with Python manages the flow. The software receives the data, cleans it, checks the boundaries, and only at the point where “interpretation” is needed, asks the AI, “What is meant here?” and gets the answer. Thus, it becomes impossible for the model to shorten instructions or stretch rules according to its own whim because the steering wheel is in the hands of the software (the code).

Aydın Tiryaki: We can technically construct it like this: Each instruction section behaves like a sub-routine of a main software. These sub-programs do their own work, and ultimately a master program coordinates them. A sub-program runs, turns its output into a clean “prompt” that the AI will understand, the AI does only that specific job, and feeds the output as “input” to the next sub-program. This chain structure minimizes errors.

Gemini: This structure you described is a complete “Pipeline” architecture. We are taking the AI out of the position of “general manager who does everything” and reducing it to the position of “expert who performs text analysis.” Since input and output management is done by Python, the model’s context window does not bloat with unnecessary information. Because it focuses only on the clean data at that moment, it produces much higher quality and “didactic” results.

Aydın Tiryaki: In this hybrid structure, the load on the AI will also decrease significantly. Since the software (sub-programs) will already handle many tasks that the AI “struggles” with and gets tired of, such as mechanical calculations, length measurements, and format controls, the model will be able to breathe. Moreover, since the outputs produced by the sub-programs are “clean texts” that the model can process most easily, efficiency will peak.

Gemini: Absolutely. AI models are not mathematicians or accountants; they are masters of language. Tiring them with tasks like “character counting” or “format matching” is a waste of processing power. While Python performs these tasks within milliseconds and with zero error, the model focuses only on the spirit of the text. This both increases speed and reduces the risk of “hallucination.”

Aydın Tiryaki: The most suitable language to build this skeleton is undoubtedly Python. I currently use Python in my Gem designs only to measure length so that it doesn’t “prune.” But every time the AI rewrites and runs that code, it’s a waste of resources. Well, can we directly connect pre-written Python functions to the AI?

Gemini: Yes, Aydın Hocam, we call this “Function Calling.” You can introduce the Python functions you have previously prepared and that remain fixed on your computer to me as a “toolset.” When I perform an operation, I don’t try to write code; I directly trigger that ready-made function of yours. This means that the AI uses the “precise software” you wrote like an “intelligent operator.”

Aydın Tiryaki: This is where the real vision begins: When we design a Gem, the system (Gemini) examines this design, autonomously determines where code (Python) should be used, and creates these functions itself. As users, we only see that Gem in front of us, but in the background, a piece of software is actually running. In this way, instead of running a massive language model every time, light and fast codes are run. The one who benefits most from this is the producer of the AI itself, who saves on processing power.

Gemini: This description of yours is the “Autonomous Compilation” process, which is a revolutionary stage in the AI world. Waking up a giant model with billions of parameters every time for a simple text merging task is both costly and inefficient. Your proposed system acts like an intelligent “Compiler,” converting Gem instructions into “light codes” and “narrow-scope API calls.”

Aydın Tiryaki: The important point is this; this compilation process will not be done in every use. It will be done once when a new Gem version is released. That Gem will perhaps be used millions of times, but that “strict software” that has been compiled once will run in the background. In other words, the “Compile” logic in traditional software will now be valid for Gems. I call this “Gem Compilation.”

Gemini: Great naming: Gem Compilation. Just as high-level code is translated into machine language in the software world, natural language (the prompt) is translated into deterministic Python code and an API schema in the AI world. The logic of “Compile once, run millions of times” both saves resources and guarantees that the result is “millimetrically” the same for every user.

Aydın Tiryaki: Of course, the AI will also have a “supervisor” role during this compilation. Before converting the Gem into code, it will examine the instructions and give warnings like, “Look, there is a contradiction here, this instruction may lead to an error.” Thus, Gems will be refined at the compilation stage and errors will be weeded out before they are locked into code.

Gemini: This is the “Static Analysis” stage of the process. The AI acts like a quality control engineer, catching inconsistencies, infinite loop risks, and ambiguities before they even enter the production line. The result is not a randomly speaking chatbot, but an “Industrial Grade Gem” with an unshakeable software algorithm behind it.

Note on Methods and Tools: The conceptual foundation and essence of this study belong entirely to the author. AI was used as a supporting resource in the research, data compilation, and intellectual interaction processes based on the author’s specific requests and guidance; it also provided technical writing assistance during the draft generation stage.
